So back in June I challenged myself to write a BASIC interpreter in a weekend. The next time I mentioned it was to admit defeat. I didn't really explain in any detail, because I thought I'd wait a few days and try again and I was distracted at the time I wrote my post.

As it happened that was over four months ago, so clearly it didn't work out. The reason for this was because I was getting too bogged down in the wrong kind of details. I'd got my heart set on doing this the "modern" way:

- Write a lexer to spit the input into tokens

LINE-NUMBER:10,PRINT,"Hello, World"

- Then I'd take those tokens and form an abstract syntax tree.

- Finally I'd walk the tree evaluating as I went.

The problem is that almost immediately I ran into problems, my naive approach didn't have a good solution for identifying line-numbers. So I was too paralysed to proceed much further.

I sidestepped the initial problem and figured maybe I should just have a series of tokens, somehow, which would be keyed off line-number. Obviously when you're interpreting "traditional" BASIC you need to care about lines, and treat them as important because you need to handle fun-things like this:

10 PRINT "STEVE ROCKS"

20 GOTO 10

Anyway I'd parse each line, assuming only a single statement upon a line (ha!) you can divide it into:

- Number - i.e. line-number.

- Statement.

- Newline to terminate.

Then you could have:

code{blah} ..

code[10] = "PRINT STEVE ROCKS"

code[20] = "GOTO 10"

Obviously you spot the problem there, if you think it through. Anyway. I've been thinking about it off and on since then, and the end result is that for the past two evenings I've been mostly writing a BASIC interpreter, in golang, in 20-30 minute chunks.

The way it works is as you'd expect (don't make me laugh ,bitterly):

- Parse the input into tokens.

- Store those as an array.

- Interpet each token.

- No AST

- No complicated structures.

- Your program is literally an array of tokens.

I cheated, horribly, in parsing line-numbers which turned out to be exactly the right thing to do. The output of my naive lexer was:

INT:10, PRINT, STRING:"Hello World", NEWLINE, INT:20, GOTO, INT:10

Guess what? If you (secretly) prefix a newline to the program you're given you can identify line-numbers just by keeping track of your previous token in the lexer. A line-number is any number that follows a newline. You don't even have to care if they sequential. (Hrm. Bug-report?)

Once you have an array of tokens it becomes almost insanely easy to process the stream and run your interpreter:

program[] = { LINE_NUMBER:10, PRINT, "Hello", NEWLINE, LINE_NUMBER:20 ..}

let offset := 0

for( offset < len(program) ) {

token = program[offset]

if ( token == GOTO ) { handle_goto() ; }

if ( token == PRINT ) { handle_print() ; }

.. handlers for every other statement

offset++

}

Make offset a global. And suddenly GOTO 10 becomes:

- Scan the array, again, looking for "LINE_NUMBER:10".

- Set

offsetto that index.

Magically it all just works. Add a stack, and GOSUB/RETURN are handled with ease too by pushing/popping the offset to it.

In fact even the FOR-loop is handled in only a few lines of code - most of the magic happening in the handler for the "NEXT" statement (because that's the part that needs to decide if it needs to jump-back to the body of the loop, or continue running.

OK this is a basic-BASIC as it is missing primtives (CHR(), LEN,etc) and it only cares about integers. But the code is wonderfully simple to understand, and the test-case coverage is pretty high.

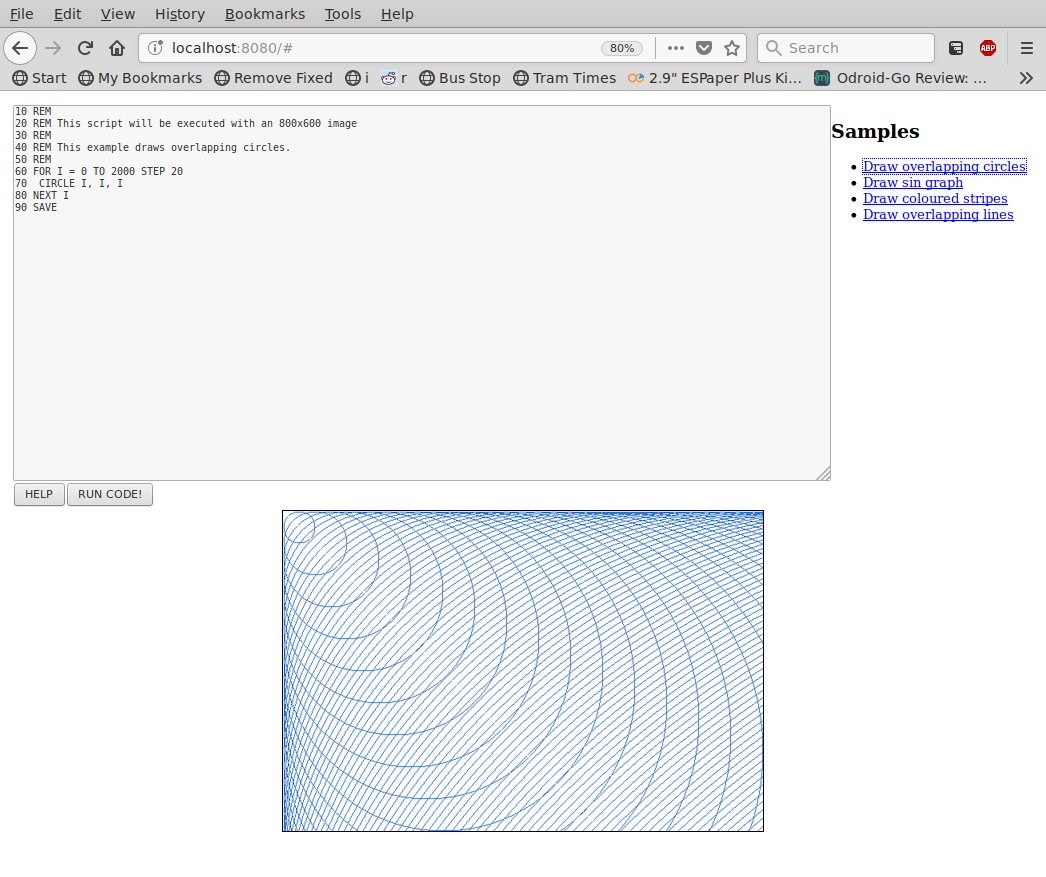

I'll leave with an example:

10 REM This is a program

00 REM

01 REM This program should produce 126 * 126 * 10

02 REM = 158760

03 REM

05 GOSUB 100

10 FOR i = 0 TO 126

20 FOR j = 0 TO 126 STEP 1

30 FOR k = 0 TO 10

40 LET a = i * j * k

50 NEXT k

60 NEXT j

70 NEXT i

75 PRINT a, "\n"

80 END

100 PRINT "Hello, I'm multiplying your integers"

110 RETURN

Loops indented for clarity. Tokens in upper-case only for retro-nostalgia.

Find it here, if you care:

I had fun. Worth it.

I even "wrote" a "game":

Tags: basic, golang, interpreter 2 comments